We are at the very beginning of a fundamental shift in the way humans communicate with computers. I began laying out my case for this in my essay *The Hidden Homescreen*. There, I argued that as Internet-powered services are distributed through an increasingly fractured set of channels, the metaphor of apps on a "homescreen" falls apart.

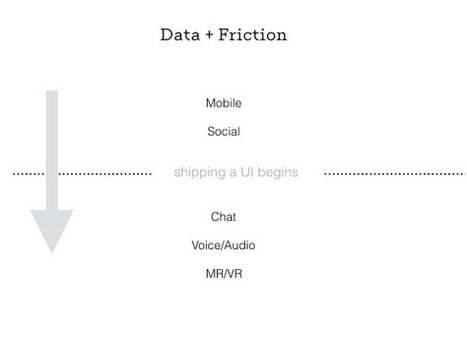

The first obvious application was chatbots, but as new kinds of interfaces come online, this shift becomes even more important. To understand it, it's worth examining how past platform changes have created entirely new businesses and business models. At its heart, the shift is about the relationship between reducing friction and the resulting increase in data collection.


Very interesting post. User interfaces have indeed evolved into user experiences, and as products and services saturate our free and idle moments, context management and a focus on relevance will indeed be core.

Plus, we won't manage the dozens of objects in a smart home with dozens of apps and screen interactions. This is where http://hayo.io could step in.